Make no mistake about it, the EDC systems of 2020 are using a 1990s design. (OK, granted, there are some innovators out there like ClinPal with their patient-centric trial approach.) But the vast majority of today’s EDC systems, from Omnicomm to Oracle to Medidata to Medrio, are using a 1990s design. Even the West Coast startup Medable is going the route of “if you can’t beat them, join them” and fielding the usual alphabet soup of buzzword-compliant modules: ePRO, eSource, eConsent etc. Shame on you.

Instead of using in-memory databases for real-time clinical data acquisition, we’re fooling around with SDTM and targeted SDV.

In reality, SDTM is a standard for submitting tabulated results to regulatory authorities (not a transactional database, nor an appropriate data model for time series). And even more reality: we should not be doing SDV to begin with, so why do targeted SDV if not to perpetuate the CRO billing cycle?

Freedom from the past comes from ridding ourselves of the clichés of today.

Personally – I don’t get it. Maybe COVID-19 will finally change the paper-batch-SDTM-load-up-the-customer-with-services system.

So what is wrong with 1990s EDC?

The really short answer is that computers do not have two kinds of storage any more.

It used to be that you had the primary store, and it was anything from acoustic delay lines filled with mercury, via small magnetic doughnuts, via transistor flip-flops, to dynamic RAM.

And then there was the secondary store: paper tape, magnetic tape, disk drives the size of houses, then the size of washing machines, and these days so small you could mistake one for the MP3 player in your pocket.

And people still program their EDC systems this way.

They have variables in paper forms that site coordinators fill in on paper and then 3-5 days later enter into suspiciously-paperish-looking HTML forms.

For some reason, instead of making a great UI for the EDC, a whole group of vendors gave up and created a new genre called eSource, creating immense confusion as to why you need another system anyhow.

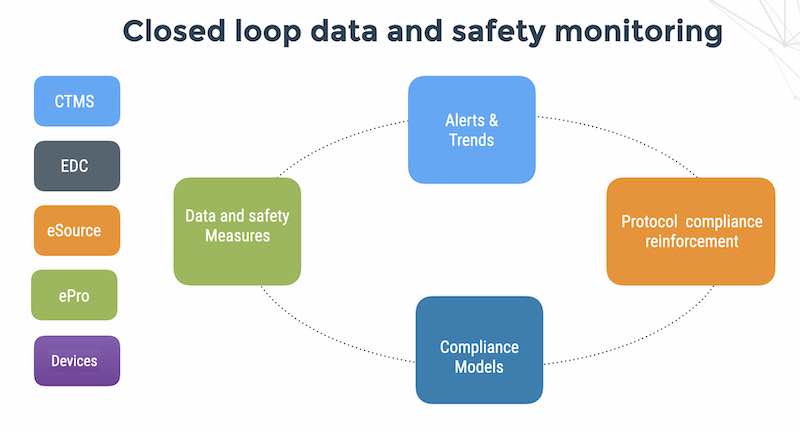

What the guys at Gartner euphemistically call a highly fragmented and non-integrated technology stack.

What the site coordinators who have to deal with 5 different highly fragmented and non-integrated technology stacks call a nightmare.

Awright.

Now we have some code, in Java or PHP or maybe even .NET, THAT READS THE VARIABLES FROM THE FORM AND PUTS THEM INTO VARIABLES IN MEMORY.

Now we have variables in “memory” and move data to and from “disk” into a “database”.

I like the database thing, where clinical people ask us, “So you have a database?” This is kinda like Dilbert: oh yeah, I guess so. Mine is a paradigm-shifter also.

Anyhow, today computers really only have one kind of storage, and it is usually some sort of disk. The operating system and the virtual memory management hardware have converted the RAM into a cache for the disk storage.

The database process (say Postgres, to keep the picture simple) allocates some virtual memory and tells the operating system to back this memory with space from a disk file. When it needs to send an object to a client, it simply refers to that piece of virtual memory and leaves the rest to the kernel.

If/when the kernel decides it needs to use RAM for something else, the page will get written to the backing file and the RAM page reused elsewhere.

The next time Postgres refers to that virtual memory, the operating system will find a RAM page, possibly freeing one, and read the contents in from the backing file.

And that’s it.
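For the skeptics, here is a minimal sketch of that mechanism in Python, using the standard `mmap` module. It illustrates file-backed virtual memory in general and is not a claim about how any particular database engine is implemented. The file name `edc.store`, the one-record-per-page layout and the helper names (`open_backed_store`, `write_record`, `read_record`) are all made up for the example.

```python
import mmap
import os
import tempfile

PAGE = mmap.PAGESIZE  # size of one virtual-memory page on this machine


def open_backed_store(path: str, size: int) -> mmap.mmap:
    """Map `size` bytes of the file at `path` into virtual memory."""
    fd = os.open(path, os.O_RDWR | os.O_CREAT)
    try:
        # The backing file must be at least as large as the mapping.
        if os.fstat(fd).st_size < size:
            os.ftruncate(fd, size)
        return mmap.mmap(fd, size)  # read-write mapping backed by the file
    finally:
        os.close(fd)  # mmap keeps its own duplicate of the descriptor


def write_record(store: mmap.mmap, slot: int, payload: bytes) -> None:
    """Write one record into its page; the kernel decides when it hits disk."""
    if len(payload) > PAGE:
        raise ValueError("payload larger than one page")
    start = slot * PAGE
    store[start:start + PAGE] = payload.ljust(PAGE, b"\0")


def read_record(store: mmap.mmap, slot: int) -> bytes:
    """Read a record back; a page fault pulls it in if it is not in RAM."""
    start = slot * PAGE
    return bytes(store[start:start + PAGE]).rstrip(b"\0")


if __name__ == "__main__":
    path = os.path.join(tempfile.mkdtemp(), "edc.store")  # hypothetical file
    store = open_backed_store(path, 4 * PAGE)
    write_record(store, 2, b"subject=1001;systolic=128")
    store.flush()   # ask the kernel to write dirty pages to the backing file
    store.close()

    reopened = open_backed_store(path, 4 * PAGE)
    print(read_record(reopened, 2))
    reopened.close()
```

Notice what is missing: there is no "load from disk" or "save to disk" step in the program logic. The code just touches memory, and the kernel handles the paging.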

Virtual memory was meant to make it easier to program when data was larger than the physical memory, but people have still not caught on.

And maybe with COVID-19 and sites getting shut down, people will catch on that what we need is a really nifty user interface for, GASP, THE SITE COORDINATORS and, even more AMAZING, a single database in memory for ALL the data from patients, investigators and devices.

Because at the end of the day – grandma knows that there ain’t no reason not to have a single data model for everything and just shove it into virtual memory for instantaneous, automated DATA QUALITY, PATIENT SAFETY AND RISK ASSESSMENT in real-time.

Not 5-12 weeks later at a research site visit, not a month later after the data management trolls in the basement send back some reports with queries, and certainly not after spending 6-12 months cleaning up unreliable data due to the incredibly stupid process of paper to forms to disk to queries to site visits to data managers to data cleaning.
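To make the "single data model, checked in real time" idea concrete, here is a rough sketch in Python. It uses SQLite's in-memory mode purely as a convenient stand-in for an in-memory database. The table layout, the `LIMITS` thresholds and the function names are assumptions invented for illustration. They are not clinical guidance and not any vendor's schema.

```python
import sqlite3
from datetime import datetime, timezone

# Hypothetical plausibility limits per measure (illustrative values only).
LIMITS = {"systolic_bp": (70, 180), "heart_rate": (40, 150)}


def open_store() -> sqlite3.Connection:
    """One in-memory table for data from patients, investigators and devices."""
    db = sqlite3.connect(":memory:")
    db.execute(
        """CREATE TABLE observation (
               subject_id  TEXT NOT NULL,
               source      TEXT NOT NULL
                           CHECK (source IN ('patient', 'investigator', 'device')),
               measure     TEXT NOT NULL,
               value       REAL NOT NULL,
               observed_at TEXT NOT NULL
           )"""
    )
    return db


def record(db, subject_id, source, measure, value, observed_at=None):
    """Store one observation and return any alerts raised at entry time."""
    observed_at = observed_at or datetime.now(timezone.utc).isoformat()
    db.execute(
        "INSERT INTO observation VALUES (?, ?, ?, ?, ?)",
        (subject_id, source, measure, value, observed_at),
    )
    alerts = []
    low, high = LIMITS.get(measure, (float("-inf"), float("inf")))
    if not low <= value <= high:
        alerts.append(f"{subject_id}: {measure}={value} outside [{low}, {high}]")
    return alerts


def subjects_with_out_of_range(db):
    """Query across all sources at once: which subjects need attention now?"""
    flagged = set()
    rows = db.execute("SELECT subject_id, measure, value FROM observation")
    for subject_id, measure, value in rows:
        low, high = LIMITS.get(measure, (float("-inf"), float("inf")))
        if not low <= value <= high:
            flagged.add(subject_id)
    return sorted(flagged)


if __name__ == "__main__":
    db = open_store()
    print(record(db, "1001", "device", "systolic_bp", 128))
    print(record(db, "1001", "patient", "heart_rate", 72))
    print(record(db, "1002", "investigator", "systolic_bp", 192))
    print(subjects_with_out_of_range(db))
```

The check runs the moment the value arrives, whoever or whatever sent it, so the out-of-range reading is flagged at entry rather than weeks later at a monitoring visit.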