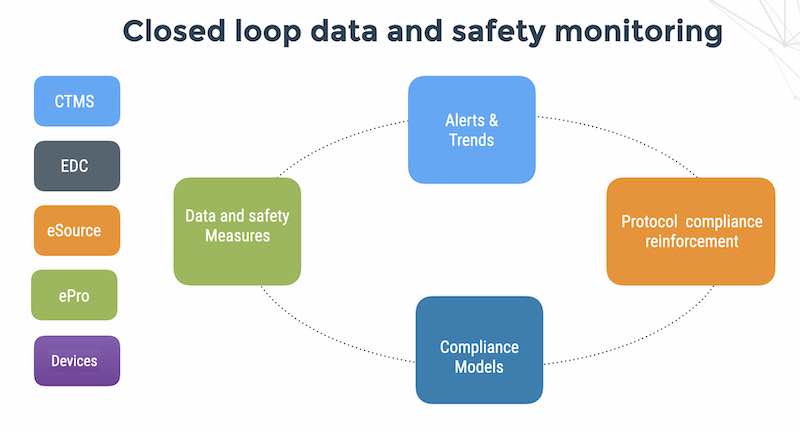

Flaskdata.io helps Life Science CxO teams outcompete using continuous data feeds from patients, devices and investigators mixed with a slice of patient compliance automation.

One of the great things about working with Israeli medical device vendors is the level of innovation, drive and abundance of smart people.

It’s why we get up in the morning.

There are hundreds of connected medical devices and digital therapeutics (last time I checked, there were over 300 digital therapeutics alone).

When you have an innovative device with network connectivity, then security, patient privacy, the availability of your product and the integrity of the data you collect have got to be priorities.

Surprisingly, we get a range of responses from people when we talk about the importance of cyber security and privacy for clinical research.

Most get it but some don’t. The people who don’t get it seem to assume that security and privacy of patient data is someone else’s problem in clinical trials.

The people who don’t work in security assume that the field is very technical, yet really – it’s all about people. Data security breaches happen because people are greedy or careless. 100% of software vulnerabilities are bugs, and most of those are design bugs which could have been avoided or mitigated by 2 or 3 people talking about the issues during the development process.

I’ve been talking to several of my colleagues for years about writing a book on “Security anti-design patterns” – and the time has come to start. So here we go:

Security anti-design pattern #1 – The lazy employee

Lazy employees are often misdiagnosed by security and compliance consultants as being stupid.

Before you flip the bozo bit on a site coordinator as being non-technical, consider that education and technical aptitude are not reliable indicators of dangerous employees who are a threat to the clinical trial assets.

Lazy employees may be quite smart, but they’d rather rely on organizational constructs than actually think, execute and occasionally get caught making a mistake.

I realized this while engaging with a client who has a very smart VP – he’s so smart he has succeeded in maintaining a perfect record of never actually executing anything of significant worth at his company.

As a matter of fact – the issue is not smarts but believing that organizational constructs are security countermeasures in disguise.

So – how do you detect the people (even the smart ones) who are threats to PHI, intellectual property and system availability of your EDC?

1 – Their hair is better organized than their thinking.

2 – They walk around the office with a coffee cup in their hand and when they don’t, their office door is closed.

3 – They never talk to peers who challenge their thinking. Instead they send emails with a NATO distribution list to everyone on the clinical trial operations team.

4 – They are strong on turf ownership. A good sign of turf ownership issues is when subordinates in the company have gotten into the habit of not challenging the coffee-cup-holding VP’s thinking.

5 – They are big thinkers. They use a lot of buzzwords.

6 – When an engineer challenges their GCP/regulatory/procedural/organizational constructs – the automatic answer is an angry retort: “That’s not your problem”.

7 – They use a lot of buzzwords, like “I need a generic data structure for my device log”.

8 – When you remind them that they already have a generic data structure for their device log and a wealth of tools for data mining their logs – amazing free tools like Elasticsearch and R – they go back and whine a bit more about generic data structures for device logs.

9 – They seriously think that ISO 13485 is a security countermeasure.

10 – They’d rather schedule a corrective action session 3 weeks after a serious security event instead of fixing the issue the next day and documenting the root causes and changes.

If this post pisses you off (or if you like it), contact me – I’m always interested in challenging projects with challenged people who challenge my thinking.